Continuing our M-AI Summit 2018 presenters series, today's article introduces Mohsen Kaboli. Kaboli is a postdoctoral researcher and TUM-IAS fellow at the Institute for Cognitive Systems (ICS) of the Technical University of Munich (TUM), and a co-founder of the TUM RoboCup soccer team. He received his Master's degree in signal processing and machine learning from the Royal Institute of Technology (KTH), Sweden, in 2011, under the supervision of Prof. Danica Kragic. In March 2012, he received an 18-month internship scholarship from the Swiss National Foundation to continue his research as a research assistant at the Idiap lab, École Polytechnique Fédérale de Lausanne (EPFL), Switzerland. In April 2013, he was awarded a three-year Marie Curie scholarship to pursue a Ph.D. at ICS. From February through April 2014, he was a visiting researcher at the Human Robotics lab in the Department of Bioengineering at Imperial College London, supervised by Prof. Etienne Burdet. From September 2015 through January 2016, he spent five months as a visiting research scholar at the Intelligent Systems and Informatics lab (ISI), directed by Prof. Yasuo Kuniyoshi at the University of Tokyo, Japan. In 2017, he received his Ph.D. in robotics and tactile sensing from TUM with the highest distinction (summa cum laude).

We were very excited to have Kaboli present on the sense of touch in robotics at the MunichAI Summit 2018. Together with his team, Kaboli built a baby robot, modeled on a 9-month-old infant, that could see and hear objects. In the video he presented, the baby robot learns the way a human infant does: through play. For example, it makes random movements while trying to grasp an object. Kaboli drew the audience's attention to one sensory modality this baby robot lacks: the sense of touch. The robot cannot sense its environment; it does not know whether its own feet are on the floor or what surrounds it.

“The idea was to make this robot sense much,” says Kaboli.

“Sense of touch in humans plays a significant role, since in 1 cm² we have 200 tactile receptors. We can feel pressure and vibration, and when we have to get information about objects we need to interact with them. We need, for example, to slide our finger to realize the texture of the object or other properties, such as stiffness,” explains Kaboli.

Some sensory information cannot be obtained through vision alone. To capture it, Kaboli and his team set out to build an electronic skin and a robotic hand that closely mimics the human hand.

“We made them feel touch. It means they are able to measure normal force, temperature.”

In a paper he co-authored in the International Journal of Humanoid Robotics, Kaboli argues that touch is perhaps the most overlooked sense. We receive tactile information about the world around us every second of the day, and we rely on it for grasping and manipulation, for assessing object properties, and for determining the emotion behind a touch gesture.

“It is difficult to compensate for a lack of touch through other senses,” says Kaboli.

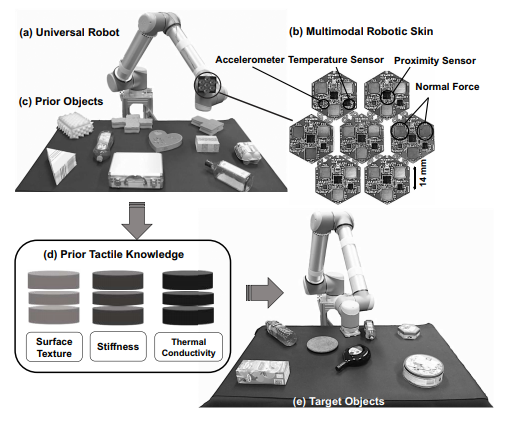

In the same paper, the authors write that, to emulate the human sense of touch, they designed and manufactured multimodal tactile sensors that give robotic systems both pre-touch sensing and a sense of touch: “Each skin cell has one microcontroller and a set of multi-modal tactile sensors, including one proximity sensor, one three-axes accelerometer, one temperature sensor, and three normal-force sensors. All skin cells are directly connected with each other via bendable and stretchable inter-connectors.”
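To make the described cell layout more concrete, here is a minimal Python sketch of how one skin cell's readings and its links to neighboring cells might be represented in software. Only the sensor counts come from the quoted description; the names `SkinCell` and `connect`, the use of plain floats, and storing neighbor IDs as a list are hypothetical illustrations, not the team's actual firmware or data format.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class SkinCell:
    """Hypothetical snapshot of one skin cell's sensor readings."""
    cell_id: int
    proximity: float = 0.0                                         # one proximity sensor (pre-touch)
    acceleration: Tuple[float, float, float] = (0.0, 0.0, 0.0)     # one three-axis accelerometer
    temperature: float = 0.0                                       # one temperature sensor
    normal_forces: Tuple[float, float, float] = (0.0, 0.0, 0.0)    # three normal-force sensors
    neighbors: List[int] = field(default_factory=list)             # IDs of directly connected cells

    def total_normal_force(self) -> float:
        """Sum the three normal-force readings of this cell."""
        return sum(self.normal_forces)


def connect(a: SkinCell, b: SkinCell) -> None:
    """Record a two-way link, standing in for a stretchable inter-connector (hypothetical)."""
    if b.cell_id not in a.neighbors:
        a.neighbors.append(b.cell_id)
    if a.cell_id not in b.neighbors:
        b.neighbors.append(a.cell_id)


# Usage: two cells, one being pressed and one sensing a nearby object.
c1 = SkinCell(cell_id=1, normal_forces=(0.25, 0.25, 0.5))
c2 = SkinCell(cell_id=2, proximity=0.8)
connect(c1, c2)
print(c1.total_normal_force())   # 1.0
print(c1.neighbors, c2.neighbors)  # [2] [1]
```

Keeping the neighbor links on each cell mirrors the quoted design, where every cell is wired directly to the cells next to it rather than to a single central hub.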

Kaboli also mentioned plans for future BMW cars to feature touch-based commands, for example using touch to park the car or to perform other actions.

He further explained that AI or cognitive systems meant to mimic humans should have at least four components:
- Perception, since humans perceive information through senses such as vision and touch.
- Interaction with objects in the environment, since humans feel the objects they interact with.
- Learning, since humans learn from their interactions and use them to make decisions.
- Planning and reasoning, since humans keep refining their decision-making as new information arrives.

How can a robot recognize objects by touch, without vision? Kaboli explains that there are two physical properties of an object a robot can recognize: its textural properties and its center of mass. These properties allow the robot to grasp and manipulate the object. To learn more about how Kaboli and his team designed their work, you can watch his full talk on our YouTube page.

Develandoo is grateful to Mohsen Kaboli and his colleague for their presentations and for the work they are doing to change the way we think about AI and robots. Their presence was a real contribution to making our summit more productive and complete.
